Shenzhen Xunlong Software’s $19.90 “Orange Pi AI Stick Lite” USB stick features a GTI Lightspeeur SPR2801S NPU at up to 5.6 TOPS @ 100MHz. It’s supported with free, Linux-based AI model transformation tools.

Shenzhen Xunlong Software’s Orange Pi project has released an AI accelerator in a USB stick form factor, equipped with Gyrfalcon Technology, Inc.’s Lightspeeur SPR2801S CNN accelerator chip. The Orange Pi AI Stick Lite is designed to accelerate AI inferencing using the Caffe and PyTorch frameworks, with TensorFlow support coming soon. It’s optimized for use with Allwinner-based Orange Pi SBCs, but the SDK appears to be adaptable to any Linux-driven x86 or Arm-based computer with a USB port.

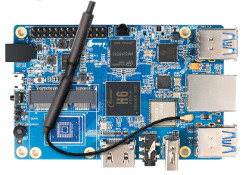

Orange Pi AI Stick Lite

The Orange Pi AI Stick Lite is a relaunch of the almost identical Orange Pi AI Stick 2801, which was announced in Nov. 2018, according to a CNXSoft post. The previous model cost $69 and required purchasing GTI’s PLAI (People Learning Artificial Intelligence) model transformation tools for $149 to do anything more than run a demo. The new device is not only much cheaper at $19.90, but the PLAI training tools are now free. There’s no download button, however; you must contact the company to get the download link.

GTI’s Lightspeeur SPR2801S, rated at up to 9.3 TOPS per Watt, is a lower-end sibling to the up to 24-TOPS/W Lightspeeur 2803S NPU, which is built into SolidRun’s i.MX 8M Mini SOM. The “best peak” performance of the 2801S is 5.6 TOPS @ 100MHz. It can also run in an “ultra low power” mode of 2.8 TOPS @ 300mW. GTI also offers a mid-range Lightspeeur 2802 model at up to 9.9 TOPS/W.
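The 9.3 TOPS/W figure is consistent with the “ultra low power” operating point quoted above: 2.8 TOPS divided by 0.3 W. As a quick check, here is a minimal Python sketch of that arithmetic. The helper name `tops_per_watt` is made up for illustration and is not part of GTI’s SDK.

```python
def tops_per_watt(tops: float, power_mw: float) -> float:
    """Return efficiency in TOPS/W from throughput (TOPS) and power (milliwatts).

    Hypothetical helper for illustration only; not a GTI or Orange Pi API.
    """
    if power_mw <= 0:
        raise ValueError("power_mw must be positive")
    return tops / (power_mw / 1000.0)


# "Ultra low power" mode quoted in the article: 2.8 TOPS @ 300mW
low_power_efficiency = tops_per_watt(2.8, 300)
print(f"{low_power_efficiency:.1f} TOPS/W")  # prints "9.3 TOPS/W"
```

The article gives no power figure for the 5.6 TOPS peak mode, so the same calculation cannot be repeated for that operating point without further data.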

The 28nm-fabricated, 7 x 7mm Lightspeeur SPR2801S has an SDIO 3.0 interface and eMMC 4.5 storage. It offers read bandwidth of 68 MB/s and write bandwidth of 84.69 MB/s. The NPU includes a 2-dimensional Matrix Processing Engine (MPE) featuring APiM (AI Processing in Memory) technology that uses magnetoresistive random access memory (MRAM) …

sources: http://linuxgizmos.com/orange-pi-ai-stick-lite-taps-5-6-tops-gryfalcon-gpu/