AI systems have developed rapidly over the past decade, driven by deep learning, neural networks, and large computers that attempt to simulate neurons. But AI is not the only field interested in these techniques: scientists and engineers are also keen to simulate the human brain to better understand how and why it works.

Simulating the brain is no trivial task. The complexity of the human brain is difficult to replicate, which is part of why the SpiNNaker computer is important.

The Challenges of Simulating a Brain

One of the first fundamental differences between the brain and computers is how their “smallest units” function. Brain neurons can have multiple connections and react to impulses in a range of different ways. Computer transistors, by comparison, are switches that can be connected to other transistors but can only be in one of two states.

Neurons are also able to forge links with other neurons and react to stimuli differently over time (which is one definition of “learning”), whereas transistor connections are fixed.

Because of these differences, scientists have to “simulate” neurons and connections in software rather than in hardware, which severely impacts the number of neurons and links that can be simulated simultaneously.

What about simulating neurons in hardware?

Neurons and transistors have little in common, but a better comparison would be simple microcontrollers and FPGAs. Microcontrollers are akin to neurons in that they can process outside signals quickly while remaining comparatively simple in architecture, and FPGAs provide the ability to break and create connections between microcontrollers.

Could hardware simulation be the key? One team of researchers believes so and has spent the last 12 years on the idea.

The SpiNNaker

A research team at the University of Manchester has spent the last 12 years creating a computer that simulates neurons and their connections using many simple cores, all interconnected in a massively parallel system. The computer, called SpiNNaker, has finally been turned on.

The million-core computer is designed to simulate up to a billion neurons in real time, allowing scientists to study neural networks and pathways realistically by using hardware rather than software.

Unlike traditional methods for simulating neurons, SpiNNaker uses individual processors that each simulate up to 1,000 neurons, and these neurons transmit and receive small packets of data to and from many other neurons simultaneously.
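To make the idea of one processor hosting many neurons more concrete, here is a minimal Python sketch. It is not SpiNNaker's actual software: the `Core` class, the leaky integrate-and-fire update, the threshold and leak values, and the `(core_id, neuron_index)` packet format are all assumptions chosen for illustration. Only the figure of up to 1,000 neurons per processor comes from the article.

```python
from dataclasses import dataclass, field

NEURONS_PER_CORE = 1000  # upper bound quoted in the article


@dataclass
class Core:
    """Hypothetical model of one processor hosting a population of neurons."""
    core_id: int
    threshold: float = 1.0  # assumed firing threshold
    leak: float = 0.9       # assumed per-step decay of the membrane potential
    potentials: list = field(
        default_factory=lambda: [0.0] * NEURONS_PER_CORE
    )

    def step(self, inputs):
        """Advance every neuron by one time step and return spike packets.

        `inputs` maps a neuron index to the input it receives this step.
        Each packet is a small tuple (core_id, neuron_index) naming the
        neuron that fired; the format is illustrative only.
        """
        packets = []
        for i in range(len(self.potentials)):
            # Leaky integration: decay the old potential, add new input.
            v = self.potentials[i] * self.leak + inputs.get(i, 0.0)
            if v >= self.threshold:
                packets.append((self.core_id, i))
                v = 0.0  # reset after firing
            self.potentials[i] = v
        return packets
```

For example, calling `Core(core_id=0).step({3: 0.6})` twice in a row leaves neuron 3 below threshold on the first step and makes it fire on the second, at which point the core emits a single packet identifying that neuron.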

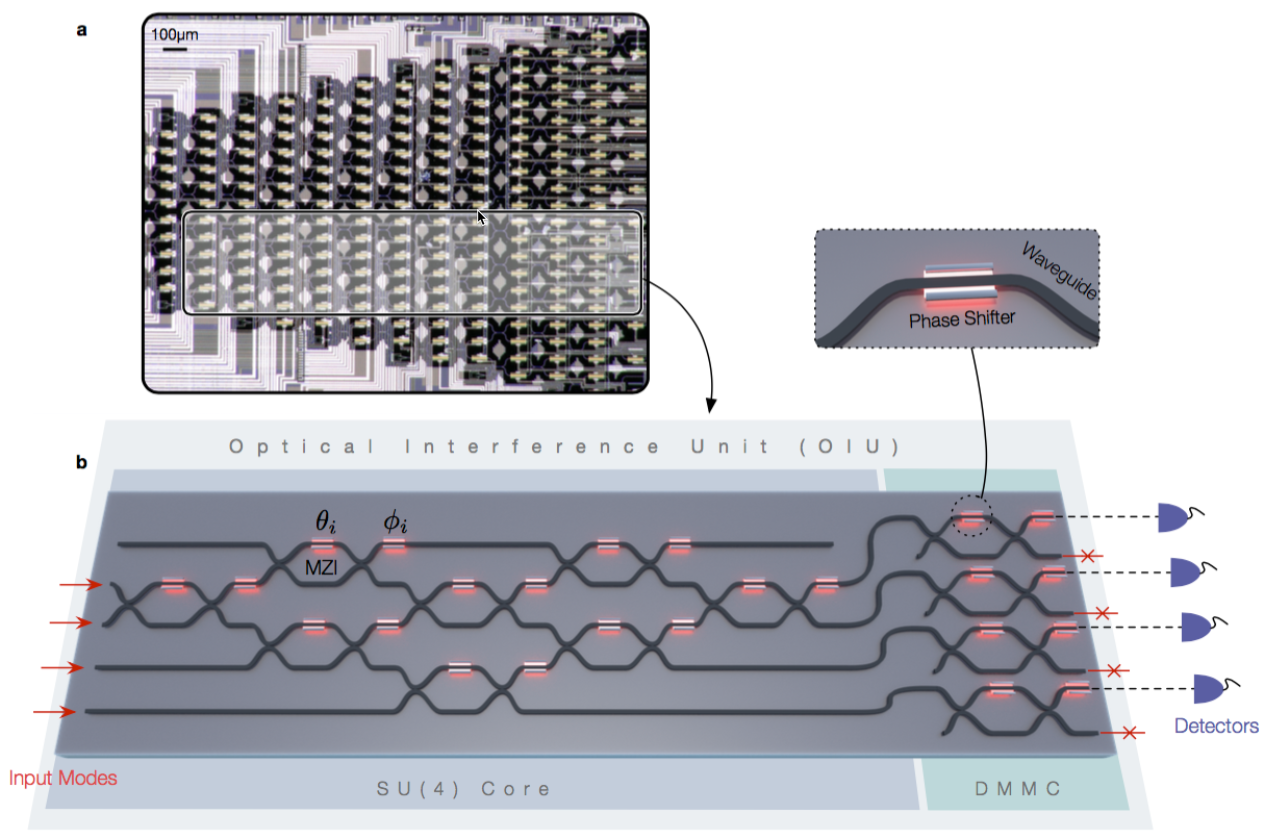

Hexagonal topology between processors and a 48-processor SpiNNaker computer - Image courtesy University of Manchester

The Spiking Neural Network Architecture (SpiNNaker) system consists of ten 19-inch computer racks, with each rack containing 100,000 ARM cores. This core density is achieved with a custom IC that contains up to 18 cores. Each board in a rack has 48 chips, which results in each board containing 864 processors.
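The figures quoted in this article can be checked with a few lines of arithmetic. The sketch below uses only numbers stated here (the per-rack core count appears to be a rounded figure); the constant names are illustrative.

```python
# Figures quoted in the article; the names are illustrative.
CHIPS_PER_BOARD = 48
CORES_PER_CHIP = 18        # "up to 18 cores" per custom IC
RACKS = 10
CORES_PER_RACK = 100_000   # as quoted, likely rounded
NEURONS_PER_CORE = 1_000   # "up to 1000 neurons" per processor

cores_per_board = CHIPS_PER_BOARD * CORES_PER_CHIP  # 48 * 18 = 864
total_cores = RACKS * CORES_PER_RACK                # 1,000,000
max_neurons = total_cores * NEURONS_PER_CORE        # 1,000,000,000

print(f"Cores per board: {cores_per_board}")
print(f"Total cores:     {total_cores:,}")
print(f"Max neurons:     {max_neurons:,}")
```

This is where the "million-core" and "billion neurons" headline numbers come from: ten racks of 100,000 cores each, with every core handling up to 1,000 neurons.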

Unlike typical software systems, the cores are arranged in a hexagonal pattern, with data transmission handled entirely in hardware. It is this topology that allows the system to simulate one billion neurons in real time. The system uses ARM9 processors and has a total of 7TB of RAM and 57K nodes; each processor has an off-die 128MB SDRAM, and each core has 32KB ROM and 64KB of data tightly-coupled memory (DTCM) …
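A hexagonal arrangement means each node talks directly to six neighbours. The sketch below shows one common way to express that on a 2D grid of `(x, y)` coordinates. The offsets, the wraparound at the grid edges, and the function name `hex_neighbours` are assumptions made for illustration, not details taken from the article.

```python
# Six neighbour offsets for a hexagonal mesh expressed in 2D (x, y)
# coordinates. This particular layout is an assumption for illustration.
HEX_OFFSETS = [(1, 0), (1, 1), (0, 1), (-1, 0), (-1, -1), (0, -1)]


def hex_neighbours(x, y, width, height):
    """Return the six neighbours of node (x, y) on a width x height grid.

    Edges wrap around (a torus), which is an assumed simplification so
    that every node has exactly six neighbours.
    """
    return [((x + dx) % width, (y + dy) % height) for dx, dy in HEX_OFFSETS]


# Neighbours of the corner node on a hypothetical 8 x 8 grid.
print(hex_neighbours(0, 0, 8, 8))
```

With six direct links per node, a packet can reach nearby nodes in very few hops, which is one reason this kind of layout suits traffic made up of many small messages.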

https://www.research.manchester.ac.uk/portal/files/60826558/FULL_TEXT.PDF